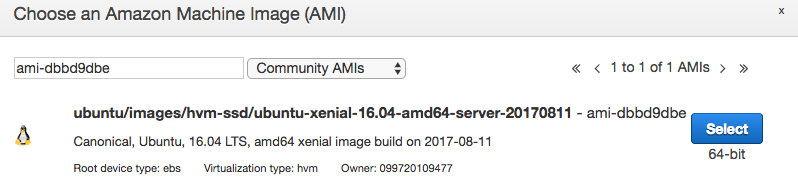

An Engineering Approach To Deploying A TensorFlow Based API on AWS GPU Instances

Our Data Engineering team trained a model on real estate images to infer what each image shows: bathroom, bedroom, swimming pool, etc. The model was served using a dockerized version of TensorFlow Serving and wrapped in a Python REST service. This was a giant leap forward in performance and accuracy compared with our previous attempt, which used a COTS (commercial off-the-shelf) Machine Learning API.

An important point is that TensorFlow Serving is, by default, compiled to run on the CPU. What we observed was that under production loads, the service was still not fast enough. Successfully launching a production-ready image classification API requires both Data Engineering and Software Engineering disciplines. In this post, we are going to show you how to productionize a pre-trained model using TensorFlow Serving in AWS. This will involve building TensorFlow Serving for GPU instances.

To Docker or Not to Docker

Dockerizing your model is a great option if you are not taking advantage of any GPU-specific hardware and are running on the CPU. It drastically simplifies your deployments and allows you to run on virtually any kind of hardware with Docker installed. However, when you introduce GPUs into the mix, you will run into problems.

First of all, TensorFlow Serving must be rebuilt with GPU optimizations turned on and compiled for the hardware you are targeting. Second, to get Docker to work in something like Amazon's Elastic Container Service (ECS), you will need to expose the GPU to Docker. This means that you will need to build your own AMI.
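
Exposing the GPU to a container in ECS comes down to asking the scheduler for GPUs in the task definition. The custom AMI is then responsible for providing the NVIDIA driver and a GPU-capable Docker runtime on the container instance. The sketch below builds such a task definition as a plain dict using the ECS `resourceRequirements` field with `type: "GPU"`. The family name, image URI, and model name are hypothetical placeholders. It also assumes that the image is a GPU build of TensorFlow Serving with the model available under `/models/<MODEL_NAME>`.

```python
import json


def gpu_task_definition(family: str, image: str, model_name: str,
                        gpus: int = 1, memory_mib: int = 4096) -> dict:
    """Build an ECS task definition (as a dict) for a GPU-backed TF Serving container."""
    if gpus < 1:
        raise ValueError("a GPU task needs at least one GPU")
    container = {
        "name": "tf-serving",
        "image": image,
        "essential": True,
        "memory": memory_mib,
        # 8501 is TensorFlow Serving's default REST port (8500 is gRPC).
        "portMappings": [{"containerPort": 8501, "hostPort": 8501, "protocol": "tcp"}],
        # The TensorFlow Serving image loads the model from /models/${MODEL_NAME}.
        "environment": [{"name": "MODEL_NAME", "value": model_name}],
        # Ask ECS to reserve physical GPUs for this container; ECS expects a string value.
        "resourceRequirements": [{"type": "GPU", "value": str(gpus)}],
    }
    return {
        "family": family,
        # GPU tasks run on EC2 container instances (here, ones launched from the custom AMI).
        "requiresCompatibilities": ["EC2"],
        "containerDefinitions": [container],
    }


if __name__ == "__main__":
    td = gpu_task_definition(
        family="image-classifier-gpu",  # hypothetical family name
        image="123456789012.dkr.ecr.us-east-1.amazonaws.com/image-classifier:gpu",  # hypothetical image URI
        model_name="image_classifier",  # hypothetical model name
    )
    print(json.dumps(td, indent=2))
```

The printed JSON could be saved to a file and registered with `aws ecs register-task-definition --cli-input-json file://taskdef.json`. ECS only places the task on a container instance that has enough unreserved GPUs. That is why the AMI's GPU setup matters.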

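The post describes the model as TensorFlow Serving wrapped in a Python REST service. The sketch below is a minimal, assumed version of the call such a wrapper might make. It uses TensorFlow Serving's REST predict endpoint (`POST /v1/models/<name>:predict`) and the `{"b64": ...}` convention for binary inputs. The model name `image_classifier` and the optional input tensor name are hypothetical. The real values depend on how the model was exported.

```python
from __future__ import annotations

import base64
import json
import urllib.request


def build_predict_body(images: list[bytes], input_name: str | None = None) -> dict:
    """Encode raw image bytes as a TF Serving REST 'instances' payload.

    Binary values are wrapped as {"b64": <base64 string>} so TF Serving
    decodes them back to bytes before feeding them to the model.
    """
    instances = []
    for img in images:
        value = {"b64": base64.b64encode(img).decode("ascii")}
        # Single unnamed input: the instance is the value itself.
        # Otherwise, key it by the model's (assumed) input tensor name.
        instances.append(value if input_name is None else {input_name: value})
    return {"instances": instances}


def predict(images: list[bytes], model: str = "image_classifier",
            host: str = "localhost", port: int = 8501,
            input_name: str | None = None, timeout: float = 10.0) -> list:
    """POST the images to TF Serving and return its 'predictions' list."""
    url = f"http://{host}:{port}/v1/models/{model}:predict"
    body = json.dumps(build_predict_body(images, input_name)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())["predictions"]
```

The REST service would call `predict([image_bytes])` for each incoming request. Sending several images per call lets TensorFlow Serving evaluate them as one batch, which is one lever for the throughput problems described above.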